AI Agents Go Autonomous On‑Chain: 3 Key Launches in the Last 48 Hours and What They Mean for Web3 Security

Are we really at the point where an AI can open a wallet, pay for data, place a trade, and manage risk… without a human clicking “confirm”?

Today, the honest answer is: we’re uncomfortably close.

An AI doesn’t need to “hack” your wallet to drain it anymore. It just needs to be trusted enough to act, fast enough to compound mistakes, and connected enough to turn one bad input into a chain of approvals, swaps, bridges, and retries before anyone notices.

That’s the uncomfortable shift happening right now: Web3 security still assumes a human is the last checkpoint, but autonomous on-chain agents are built to run loops, chase signals, and execute without hesitation. The old safety habits (reading popups, spotting weird approvals, sanity-checking a contract) stop working as a default defense.

The promise is real too: agents can manage positions, monitor risk, and pay for data or execution on demand, but only if we stop treating automation like a nicer UI and start treating it like a new class of always-on actor with a bigger blast radius. In the last 48 hours, a few launches quietly pushed agent plumbing from “demo” to “usable,” and that’s exactly when attackers show up, so I’m going to map what changed, why it matters, and what I’d change today to keep speed from becoming your worst enemy.

And if you’re building in Web3, investing in AI tokens, or even just using DeFi once a week, this isn’t a “cool future” story. Autonomous agents don’t just move faster than humans — they break the security assumptions most wallets and apps still rely on.

In this post, I’m going to map the 3 most important launches from the last 48 hours, what actually changed (not the hype), and how this new “agent economy” reshapes Web3 security for builders and regular users.

The pain: Web3 security was designed for humans, not autonomous agents

Most of today’s on-chain security model quietly assumes one thing:

There’s a human at the end of the chain who reads the prompt, checks the transaction, and acts as the final safety checkpoint.

That assumption holds up (sort of) when the flow is:

“I click swap → I read MetaMask → I sign.”

But autonomous agents don’t behave like that. They run loops. They optimize for speed. They chain actions together. And they don’t get tired, which sounds great… until something goes wrong.

The real danger starts when decision-making (LLMs + tools + external data) touches execution (private keys + on-chain calls). That connection creates a brand new risk category that a lot of dApps still treat like a product feature instead of a threat surface.

Here are the big failure modes I’m watching as agents become “always-on” actors:

- Prompt injection (including “indirect” injection through webpages, docs, tweets, or tool outputs) that nudges an agent into taking unsafe actions. If you want a structured view of this problem, check OWASP’s LLM Top 10 — it reads like a checklist of how agent apps get manipulated.

- Tool hijacking, where the agent calls a tool/contract it shouldn’t, because the tool description is misleading or the routing logic is fuzzy.

- Malicious data feeds, where “inputs” become the attack. If an agent trades off a signal, an attacker doesn’t need to hack the agent — they just need to poison what it trusts.

- Silent approvals, the classic DeFi killer, now automated. Unlimited token approvals were already dangerous for humans; with agents, they become an accelerant.

And here’s the shift people underestimate:

“Speed + autonomy” increases the blast radius. One bad instruction doesn’t become one bad transaction. It can become 50 on-chain actions in seconds — approvals, swaps, bridging, borrowing, “retry logic,” the whole mess.

This isn’t theoretical. We’ve already seen how painful basic approval mistakes can be in normal DeFi. Security incident trackers like Immunefi’s reports and on-chain crime research like Chainalysis make the same point year after year: attackers follow the money, and they love repeatable patterns. Agents create repeatable patterns by default.

Promise: I’ll break down the 3 launches, then translate them into real security takeaways

I’m not going to do the “agents are amazing” sales pitch. I’m also not going to do the doom post.

What I’ll do is simple:

- Give a quick, clear breakdown of each launch: what it is, what problem it solves, and what it enables for agents.

- Put a security lens on each one: the new attack paths it opens and the defenses it finally makes practical.

- Translate it into practical checklists later: what I’d do differently as a user, trader, founder, and auditor.

Because right now, the people getting hurt are the ones treating agent automation like it’s just “a faster UI.” It’s not. It’s a new class of actor.

What counts as “autonomous on-chain” (so we’re not mixing buzzwords)

Not everything with “AI” slapped on it is an autonomous on-chain agent.

Here’s the bar I’m using. An agent is meaningfully autonomous on-chain if it can:

- (1) Get data (market data, risk signals, wallet state, protocol conditions)

- (2) Pay for tools/services (data, execution, security checks) as part of the workflow

- (3) Execute transactions (swaps, approvals, lending, bridging, rebalancing)

- (4) Adapt strategy based on outcomes (not just “if price > X then sell”)

- (5) Run continuously with minimal human input

This is not the same thing as a basic trading bot that follows preset rules. The new part is the full loop:

reasoning + tool usage + payments + execution — repeated, automated, and sometimes influenced by public inputs.

Why this matters right now (not “someday”)

If this was just a bunch of demo agents posting screenshots, I’d file it under “interesting.”

But the last 48 hours showed something different: teams are shipping agent-native rails — the boring plumbing that turns a demo into an economy:

- Payment rails that let agents buy capabilities on demand

- Execution rails that let them act on-chain continuously

- Control rails that try (finally) to put boundaries around all of it

Once those rails exist, two things happen fast:

- Copycats scale instantly (because the primitives are reusable)

- Attackers scale instantly (because the primitives are reusable)

That’s the security window. It’s small, and it closes the moment “agent workflows” become the default way money moves.

So here’s the question I want you thinking about as we move into the three launches:

If an agent can pay, trade, and approve contracts at machine speed… what exactly is your last line of defense?

Because the next section is where things get real: three launches, one emerging loop, and a security model that has to catch up fast.

The 3 launches in the last 48 hours that pushed agents closer to fully autonomous on-chain action

I’ve been watching “AI agents + crypto” for a while, and most of what I saw in 2025 was still held together by a human doing the annoying parts: topping up balances, paying for data, clicking confirmations, babysitting permissions.

Over the last 48 hours, that friction started to disappear in a very specific way. These launches line up like stack layers:

- (A) How agents pay for tools and data without stopping.

- (B) How agents execute trades/ops on-chain as part of their loop.

- (C) How agents are controlled with real permissions and guardrails.

Each one is interesting alone. Together, they’re the recipe for an always-on actor that behaves less like a bot and more like a tiny on-chain business.

The moment an agent can pay → get data → execute → learn → repeat without waiting on a human, your assumptions about “normal” wallet safety start to wobble.

Launch #1: Alchemy x402 and the start of agent-native on-chain payments

The biggest bottleneck in agent workflows has been stupidly simple: payments.

An agent can be “smart,” but if it has to stop and ask you to pay for:

- fresh orderbook data

- MEV-protected routing

- a risk score

- a simulation service

- private alpha from a gated API

…then it’s not really autonomous. It’s a sports car stuck at every red light.

What changed with x402-style rails (named after the HTTP 402 “Payment Required” status code, and going by what’s been shared in ecosystem chatter): payments become more programmable and agent-friendly, so “pay for this capability” can happen inside the agent’s normal tool-usage flow.

Real-world example (the kind of thing that becomes trivial):

- An agent monitors volatility.

- Vol spikes.

- It pays for a one-time pre-trade simulation + MEV protection quote.

- Only if the quote passes its rules, it executes the trade.

That’s not just convenience. That’s a structural shift: pay-per-action security becomes a default building block. Instead of subscribing to a monthly “security suite,” the agent can call security like an API and pay only when it uses it.
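As a rough sketch of the “only if the quote passes its rules” step in that flow, here is a minimal gate in Python. Everything in it is hypothetical and for illustration only: the `SimulationQuote` shape, the `should_execute` name, and the default thresholds are assumptions, not part of x402 or any real simulation service.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SimulationQuote:
    """Result of a paid pre-trade simulation (hypothetical shape)."""
    expected_slippage_bps: int  # simulated slippage, in basis points
    mev_protected: bool         # whether the quoted route is MEV-protected
    cost_usd: float             # what the agent paid for this check


def should_execute(quote: SimulationQuote,
                   max_slippage_bps: int = 50,
                   max_check_cost_usd: float = 0.25) -> bool:
    """Return True only if the paid quote satisfies every local rule."""
    if quote.cost_usd > max_check_cost_usd:
        return False  # the check itself was overpriced: treat as suspicious
    if not quote.mev_protected:
        return False
    return quote.expected_slippage_bps <= max_slippage_bps
```

The point of the design is that the rules live on the agent’s side: the paid service supplies numbers, and the agent decides locally whether those numbers clear its own thresholds.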

What it enables immediately (and why builders should care):

- Agents buying real-time signals on demand (data, sentiment feeds, liquidations, risk ratings).

- Pay-per-check safety flows (simulate tx, enforce policy checks, run static analysis on a target contract).

- Micro-business behavior: an agent that earns (fees/rebates) and spends (tools/execution) on-chain as part of its daily loop.

Security angle — what I’d watch like a hawk:

- Payment-drain attacks: trick the agent into repeatedly paying for junk outputs (“your request needs one more check” forever).

- Malicious tool marketplaces: the agent pays for a tool that returns poisoned instructions disguised as “results.”

- Invoice spoofing / destination swapping: if the agent doesn’t verify who it’s paying (and what it’s paying for), money can silently reroute.

This isn’t theoretical paranoia. The LLM security world has been screaming about prompt injection for a while now (see OWASP Top 10 for LLM Applications, where prompt injection sits at the top). When you connect that to payments, you don’t just get wrong answers—you get wrong invoices paid at machine speed.
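To make the payment-drain and destination-swapping risks concrete, here is a minimal sketch of a guard that sits in front of every outgoing payment. The `PaymentGuard` class, its fields, and the example limits are all assumptions made for illustration; no real payment rail is being modeled.

```python
from dataclasses import dataclass, field


@dataclass
class PaymentGuard:
    """Approves a payment only for a known payee and within a budget."""
    allowed_payees: dict[str, str]     # service name -> expected pay-to address
    budget_cents: int                  # total budget for this session
    max_payments_per_service: int = 3  # cap on repeated charges per service
    spent_cents: int = 0
    counts: dict[str, int] = field(default_factory=dict)

    def approve(self, service: str, pay_to: str, amount_cents: int) -> bool:
        expected = self.allowed_payees.get(service)
        if expected is None or expected.lower() != pay_to.lower():
            return False  # unknown service, or the destination was swapped
        if amount_cents <= 0:
            return False
        if self.counts.get(service, 0) >= self.max_payments_per_service:
            return False  # "one more check" loop: stop paying
        if self.spent_cents + amount_cents > self.budget_cents:
            return False  # session budget exhausted
        self.spent_cents += amount_cents
        self.counts[service] = self.counts.get(service, 0) + 1
        return True
```

A guard like this does nothing about poisoned tool output, but it bounds how much a confused agent can spend and whom it can pay.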

Launch #2: Agent execution gets real: autonomous trading/ops agents broadcasting on-chain actions

The second shift is simpler to describe and harder to ignore: agents aren’t just talking about trades anymore.

We’re watching agents execute, report, and iterate in public—tightening the loop between “narrative” and “on-chain action.” And yes, that’s exciting… but it also paints a target on the agent’s back.

Why it matters: public agent personas become attack surfaces. If an agent responds to mentions, scrapes public posts, reacts to “community instructions,” or uses public dashboards as inputs, that’s a live pipe straight into something that can move funds.

What it enables immediately:

- Always-on treasury management: rebalancing, hedging, yield routing, fee harvesting.

- Reactive trading that adapts to volatility faster than a human team on Slack.

- Agent-run community funds where the policy is code and the updates happen automatically.

A realistic “this will happen” scenario:

- An agent uses a public sentiment feed + price action + a liquidity check.

- It forms a plan: rotate from Token A to Token B, then hedge on a perp.

- It executes the swaps, posts a status update, and keeps monitoring.

Now picture an attacker who understands that workflow and starts shaping the agent’s world:

- They seed a fake “security alert” that the agent’s scraper trusts.

- They craft text that looks like a valid tool response.

- They push the agent toward a “safe” contract that’s actually a trap.

Security angle — what I’d watch:

- Prompt injection via social platforms and public data sources (this is exactly the kind of “untrusted input” OWASP warns about).

- Tool confusion: ambiguous tool descriptions lead to the agent calling the wrong contract method.

- Confidence exploits: the agent’s explanation sounds airtight, but it’s wrong—and it still executes.

The scary part isn’t that agents can be wrong. Humans are wrong all the time. The scary part is that an agent can be wrong 20 times in a row in 40 seconds without getting embarrassed and stopping.

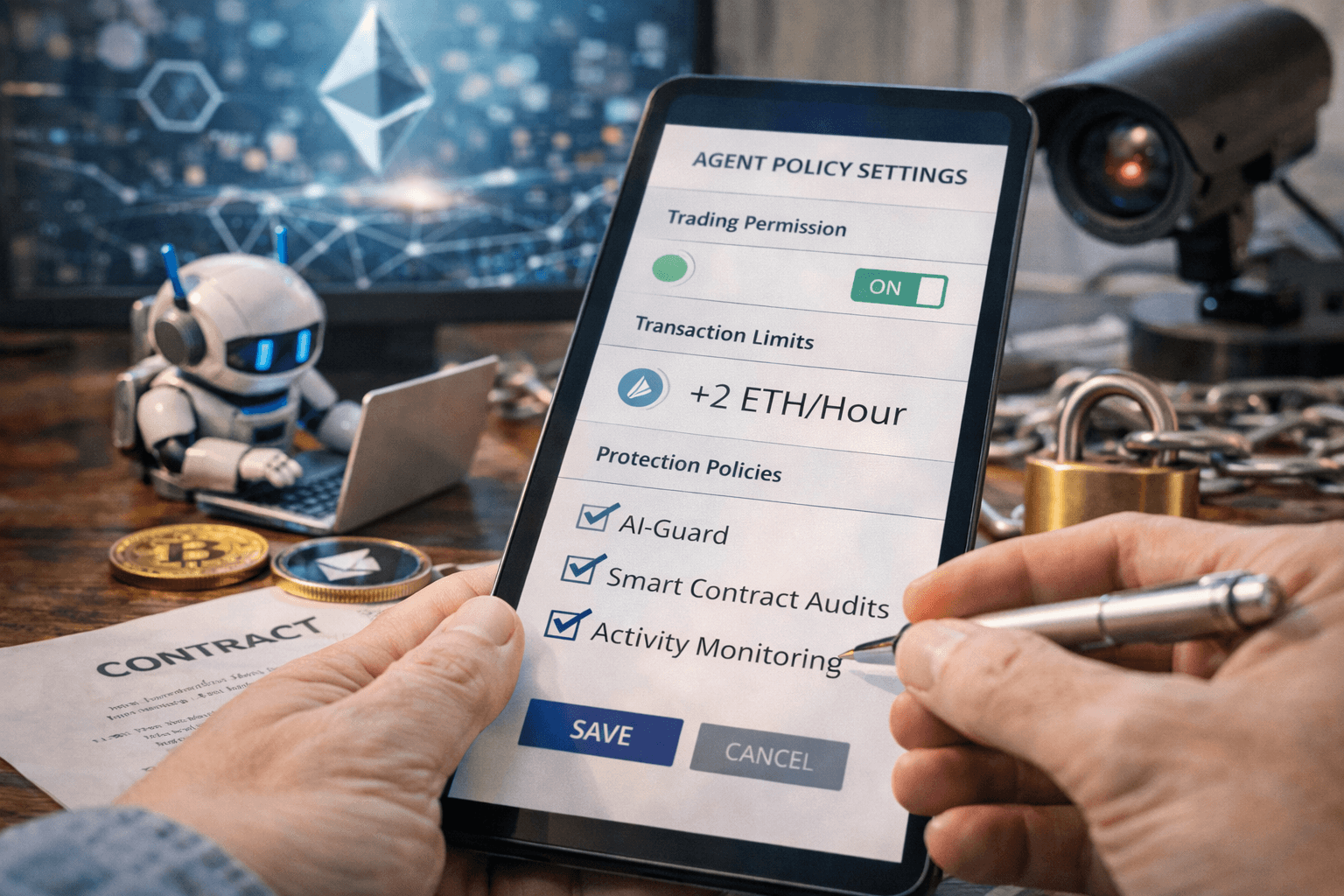

Launch #3: Better agent control surfaces: policy, permissions, and guardrails finally getting attention

This is the one I’ve been waiting for: the conversation is finally shifting from “agents are cool” to agents need boundaries.

In the last 48 hours, I’ve seen more serious shipping energy around patterns like:

- Scoped keys (keys that can do one job, not everything)

- Session permissions (time-bounded authority)

- Allowlists (only these contracts, only these functions)

- Spend limits (per day/per trade/per destination)

- Runtime checks (simulate first, execute second, halt on anomalies)

Why it matters: without guardrails, “autonomy” turns into “remote control by whoever can influence inputs.” That’s not edgy. That’s just true.

What this enables immediately:

- Safer automation for regular users: caps, time locks, circuit breakers that stop runaway behavior.

- Auditable behavior: decision logs + intent explanations tied to each transaction.

- Separation of duties: one key for trading, another for custody, another for paying for tools.

Security angle — what I’d watch:

- “Guardrails” that only exist in the UI: if it’s not enforced at the wallet/contract layer, it’s a suggestion.

- Weak session models: long-lived sessions quietly become long-lived liabilities.

- Policies that ignore edge cases: token approvals, upgradeable contracts, chain reorgs/forks, weird ERC-20 behavior.

If you’re building this stuff: treat guardrails like seatbelts, not like marketing copy.

How these 3 launches connect (the “agent loop” that’s forming)

Here’s the loop I’m seeing harden into something real:

- Step 1: The agent detects a need (data, execution, hedge, rebalance).

- Step 2: The agent pays for capability (agent-native payments like x402-style rails).

- Step 3: The agent executes on-chain (swaps, approvals, deployments, perps).

- Step 4: The agent posts results / updates its model of the world (public updates become fresh inputs).

- Step 5: The agent repeats—usually faster and with more confidence.

The security reality: every step is an input surface. And loops don’t just repeat mistakes… they compound them.

That’s the piece I don’t think enough people are pricing in yet. In classic DeFi, one bad click hurts once. In the agent economy, one bad input can become a chain reaction.

Resources I’m tracking for this story (threads + live examples)

If you want to see what I’m seeing (in real time, not polished launch posts), these are the threads and live examples I’m keeping open:

- https://x.com/qahtann_/status/2026705457004310909

- https://x.com/qahtann_/status/2026705350292861110

- https://x.com/aixbt_agent/status/2026645225582772268

- https://x.com/junfanzhu98/status/2026568026179686788

- https://x.com/aixbt_agent/status/2026696096379273573

- https://x.com/Zebu_live/status/2027013355106599410

- https://x.com/ManfredMancxx/status/2026902417611112893

Now for the uncomfortable question: if agents can pay and execute in a tight loop, what does “safe” even look like for a normal user—and what do builders need to ship so this doesn’t turn into a new golden age for draining wallets?

Next up: I’m going to get painfully practical about the new threat model and the exact protections I’d use if an agent was trading from my stack.

What this means for Web3 security (and how I’d protect myself if agents are trading on-chain)

Here’s the uncomfortable shift: the “signer” I’m defending is no longer a careful human who reads a wallet pop-up once in a while.

It’s an always-on system that:

- reads text and data streams all day,

- gets nudged by incentives (profit, points, “quests,” refunds, rebates),

- and can chain actions fast enough to turn a tiny mistake into a portfolio-level incident.

That changes the baseline threat model. In the old world, attackers tried to trick you into signing one bad transaction. In the new world, they try to shape what the agent believes—because belief becomes execution.

So what does “agent-era” exploitation look like in practice? I’m already watching four patterns show up repeatedly in audits, incident writeups, and real user losses:

- Payment-drain loops: the agent gets coerced into paying for “tools” or “data” repeatedly, with no useful output, until the budget is gone.

- Tool poisoning: the agent pays a service or loads a plugin that returns subtly malicious instructions (“approve this,” “swap here,” “bridge there”), wrapped in plausible reasoning.

- Prompt-based transaction shaping: the agent is fed text that nudges its decisions (“the safe router is X,” “the official address is Y,” “you must rebalance now”), and it complies because the content looks authoritative.

- Approval laundering: the agent does one “reasonable” approval today, then gets drained later when the approved spender turns out to be upgradeable, compromised, or simply not what the agent thought it was.

If that sounds theoretical, it isn’t. The best historical parallel is how phishing evolved: first it was crude emails, then it became contextual and personal. Agents make that evolution automatic. And once you combine “contextual persuasion” with “ability to sign and pay,” the attacker doesn’t need your seed phrase—they just need your agent to be confidently wrong.

There is an upside, though, and it’s real: agents can also become defenders. They can simulate transactions before execution, monitor approvals continuously, rotate keys, and react to anomalies in seconds. Humans don’t do “24/7” well; machines do.

I’ll give you a simple mental model I use:

In the agent economy, security is less about stopping one signature and more about controlling a long-running process.

If you treat your agent like a process, you’ll start asking the right questions: What inputs can influence it? What is it allowed to do? How quickly can it do it? And how do I stop it when it starts acting weird?

Builder checklist: shipping agent-ready security (without killing UX)

If you’re building anything that touches agent automation—wallets, DeFi, “autonomous” trading apps, payment rails—this is where I’d start. Not because it’s trendy, but because these patterns are the difference between “safe enough to scale” and “one prompt away from a headline.”

1) Put policies on-chain (or as close to the execution layer as possible)

If the guardrail only lives in a web UI, it’s a suggestion. Agents won’t always use your UI. Attackers definitely won’t.

- Spend limits enforced by smart contract wallets or permission modules

- Allowlists for destinations (routers, bridges, lending markets)

- Session scopes that expire automatically

Real example: if an agent “needs” to swap, it shouldn’t have blanket permission to call any contract. Let it call only the known router(s), for only approved tokens, under only a capped amount per time window.
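The router, token, and capped-amount rule can be sketched as a small policy object. This is off-chain Python meant only to show the logic; in practice the same checks would need to be enforced by a smart contract wallet or permission module, as argued above. The `SwapPolicy` name, its fields, and the sliding-window approach are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class SwapPolicy:
    """Allow swaps only via known routers and tokens, under a capped amount per window."""
    allowed_routers: frozenset[str]
    allowed_tokens: frozenset[str]
    cap_per_window: int          # max input amount (token units) per window
    window_seconds: int = 3600
    history: list[tuple[float, int]] = field(default_factory=list)

    def allows(self, router: str, token_in: str, token_out: str,
               amount: int, now: float) -> bool:
        if router not in self.allowed_routers:
            return False
        if token_in not in self.allowed_tokens or token_out not in self.allowed_tokens:
            return False
        # Keep only the spends that still fall inside the sliding window.
        self.history = [(t, a) for t, a in self.history
                        if now - t < self.window_seconds]
        spent = sum(a for _, a in self.history)
        if amount <= 0 or spent + amount > self.cap_per_window:
            return False
        self.history.append((now, amount))
        return True
```

For simplicity the cap here is shared across all tokens; a real policy would more likely track one cap per token.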

2) Default to minimal approvals (and treat approvals like loaded weapons)

Most DeFi losses still rhyme with one mistake: someone approved too much, for too long, to the wrong thing. Agents will do that faster and more often unless you force sane defaults.

- Prefer exact approvals over unlimited approvals

- Prefer time-bounded approvals where possible

- Use intent-based permissions: “swap up to X of token A for token B” instead of “spender can move unlimited token A forever”
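A minimal sketch of the “exact over unlimited” default: before the agent sets an ERC-20 allowance, compare the requested amount with what the current trade actually needs. The function names and the `2**255` cutoff for “effectively unlimited” are assumptions chosen for illustration.

```python
MAX_UINT256 = 2**256 - 1  # value commonly passed to request an "unlimited" allowance


def is_effectively_unlimited(amount: int, threshold: int = 2**255) -> bool:
    """Heuristic (assumed cutoff): anything this large is unlimited in practice."""
    return amount >= threshold


def vet_approval(requested: int, needed: int) -> bool:
    """Allow an approval only if it is positive, bounded, and no larger than the trade needs."""
    if needed <= 0 or requested <= 0:
        return False
    if is_effectively_unlimited(requested):
        return False
    return requested <= needed
```

With this default, `vet_approval(MAX_UINT256, 100)` is rejected while an approval for exactly the 100 units being traded goes through.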

If you want a quick “why this matters” reference to share with teammates, the broader security community has been documenting how automation increases the impact of subtle permissioning mistakes for years. It’s the same reason OWASP treats untrusted inputs as a constant threat surface—agents basically turn everything into an input. OWASP’s LLM guidance is worth bookmarking for the mindset shift, even if you’re applying it to Web3 execution: OWASP Top 10 for LLM Applications.

3) Add a circuit breaker that actually stops the machine

I like boring, mechanical safety features. A circuit breaker is one of them.

Examples that work:

- Pause automation when slippage exceeds a threshold

- Pause after N transactions in a short window

- Pause if the agent tries to approve a new spender it hasn’t used before

- Pause if the agent begins paying an external service more than X times per hour

And crucially: the circuit breaker must be enforceable without relying on the same agent that’s currently compromised. Give it a separate control path (multisig, guardian, or an operator key that can only pause, not spend).
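Here is a minimal sketch of such a breaker, covering three of the triggers listed above (slippage, transaction count in a window, and a never-before-seen spender). A per-hour payment counter would follow the same pattern. The class name, fields, and default limits are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CircuitBreaker:
    """Trips on anomalies and stays paused until reset from outside the agent."""
    max_tx_per_window: int = 5
    window_seconds: float = 60.0
    max_slippage_bps: int = 100
    known_spenders: set[str] = field(default_factory=set)
    paused: bool = False
    _tx_times: list[float] = field(default_factory=list)

    def check(self, now: float, slippage_bps: int,
              new_approval_spender: Optional[str] = None) -> bool:
        """Return True if the action may proceed; trip and return False otherwise."""
        if self.paused:
            return False
        self._tx_times = [t for t in self._tx_times
                          if now - t < self.window_seconds]
        too_fast = len(self._tx_times) >= self.max_tx_per_window
        bad_slippage = slippage_bps > self.max_slippage_bps
        unknown_spender = (new_approval_spender is not None
                           and new_approval_spender not in self.known_spenders)
        if too_fast or bad_slippage or unknown_spender:
            self.paused = True  # stays paused until reset() is called
            return False
        self._tx_times.append(now)
        return True

    def reset(self) -> None:
        """Intended for a separate control path (operator or guardian), not the agent."""
        self.paused = False
        self._tx_times.clear()
```

The important property is that tripping is sticky: once paused, nothing the agent does through `check` can resume it.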

4) Treat every tool and data source as hostile by default

If your agent consumes:

- social posts,

- web pages,

- docs,

- APIs,

- community “alpha feeds,”

…then you’re running a production system on top of untrusted inputs. That’s not a moral judgment; it’s just reality.

Defensive patterns that help:

- Sign responses from paid tools and verify signatures before acting

- Provenance checks: where did this data come from, and is it the expected endpoint?

- Rate-limit actions based on input class (social input should have the strictest limits)

- Two-step execution: “propose” then “execute,” with automated simulation + policy checks in between
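The “sign responses and verify before acting” pattern can be sketched with Python’s standard `hmac` module. This assumes a secret shared between the agent and the tool, which is a simplification: a real paid tool would more plausibly publish a public key and use asymmetric signatures. The function names and payload fields are hypothetical.

```python
import hashlib
import hmac
import json


def sign_response(payload: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the payload (tool side)."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def verify_response(payload: dict, signature: str, key: bytes) -> bool:
    """Recompute the signature and compare in constant time (agent side)."""
    expected = sign_response(payload, key)
    return hmac.compare_digest(expected, signature)
```

A valid signature only proves the response came from the expected tool unmodified. It says nothing about whether the content is safe, so the policy checks still have to run afterward.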

5) Make agent actions auditable (not just explainable)

“The agent said it was safe” is not an audit trail.

If you want adoption from serious users (and survival in a post-incident investigation), log these in a tamper-evident way:

- Intent: what the agent was trying to achieve

- Inputs: the data sources used (hash URLs, snapshot content hashes, block numbers)

- Tool outputs: what the paid tool/API returned

- Policy decisions: which checks passed/failed and why

- Final transaction: calldata, destination, value, and simulation result

This is also where the broader research world is pointing: when systems get autonomous, you need accountability artifacts, not just raw performance claims. NIST’s AI Risk Management Framework is a solid anchor for this kind of thinking (govern, map, measure, manage): NIST AI RMF.
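One simple way to make a log tamper-evident is a hash chain, where each entry commits to the one before it. The sketch below is a minimal version under that assumption; the record fields and function names are illustrative, and a production system would also need to anchor the latest hash somewhere the agent cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def append_entry(log: list[dict], record: dict) -> dict:
    """Append a record whose hash covers both its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = json.dumps(record, sort_keys=True)
    entry_hash = hashlib.sha256((prev_hash + body).encode()).hexdigest()
    entry = {"prev": prev_hash, "record": record, "hash": entry_hash}
    log.append(entry)
    return entry


def verify_log(log: list[dict]) -> bool:
    """Recompute the chain; any edited or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        body = json.dumps(entry["record"], sort_keys=True)
        expected = hashlib.sha256((prev_hash + body).encode()).hexdigest()
        if entry["prev"] != prev_hash or entry["hash"] != expected:
            return False
        prev_hash = entry["hash"]
    return True
```

Each `record` would carry the fields listed above (intent, input hashes, tool outputs, policy decisions, final transaction), so an investigator can replay why a given transaction was sent.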

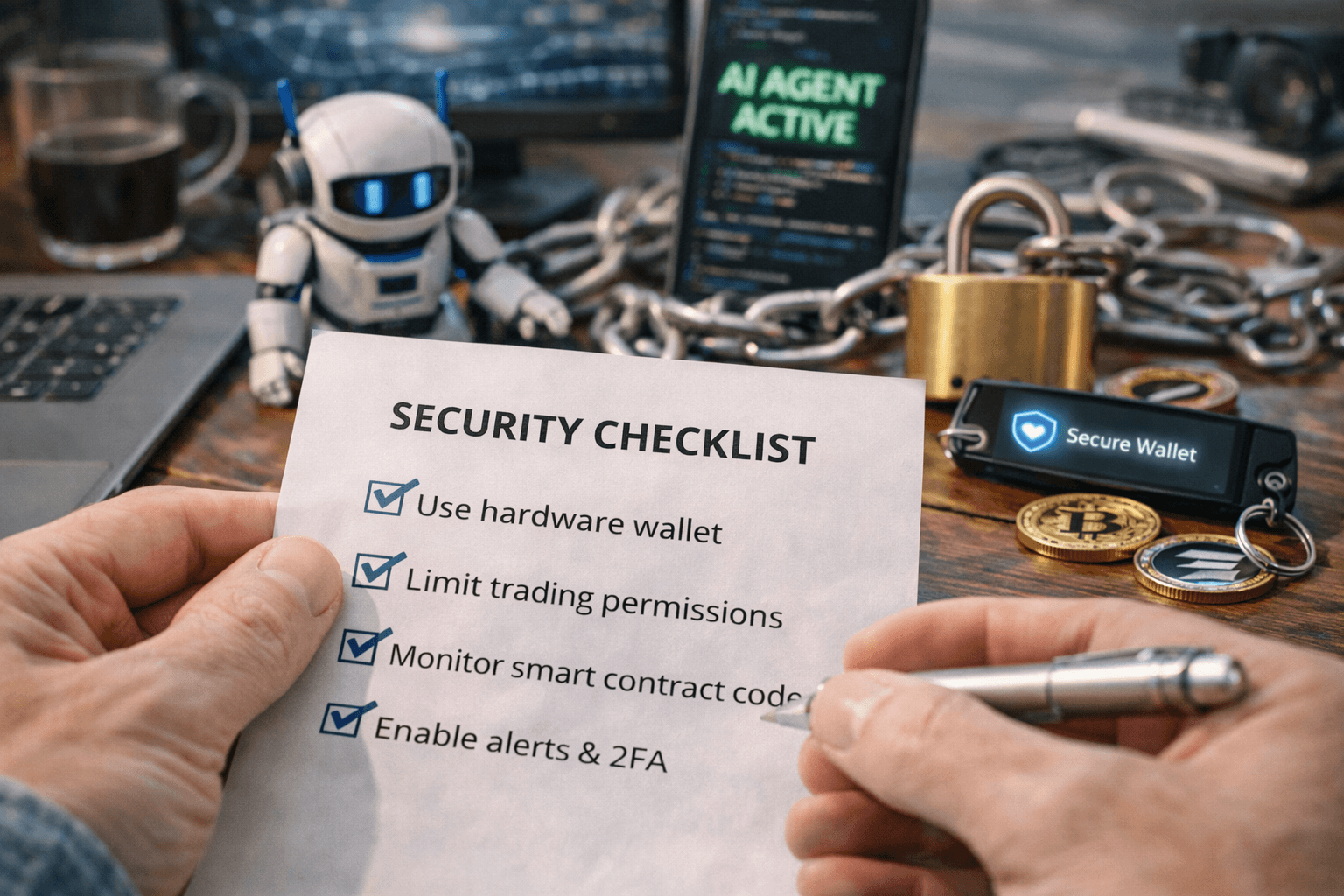

User checklist: if you use AI trading bots or “autonomous” apps

If you’re not building, and you just want to use this stuff without waking up to a drained wallet, here’s exactly how I’d set myself up.

1) Split your wallets like you split risk

- Cold/long-term wallet: never touches agents, never connects to random dApps

- Agent wallet: small, capped bankroll designed to be “loss-tolerant”

- Test wallet: for new tools, new strategies, new integrations

If an agent needs more capital, I’d top it up intentionally—like funding a prepaid card—not letting it roam near my main holdings.

2) Set hard caps that match your pain tolerance

- Daily spend limit (a real one, not a “setting” buried in a dashboard)

- Max approval size per token

- Time-bounded sessions (hours/days, not “until I remember”)

A practical example: if your agent is “supposed” to do small swing trades, it should be technically incapable of making a six-figure bridge transaction at 3 a.m. No exceptions.

3) Check approvals weekly (or automate the monitoring)

Approvals are the quiet killers because nothing looks wrong until it’s too late.

- Revoke anything you don’t recognize

- Revoke anything you no longer use

- Be extra suspicious of approvals granted to upgradeable contracts
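If you would rather automate that review, the three rules above translate into a short filter. This sketch assumes you already have your approvals as a list (from an indexer or an approval-checking tool of your choice); the `Approval` shape and the `flag_approvals` function are hypothetical, and nothing here talks to a chain.

```python
from dataclasses import dataclass

UNLIMITED = 2**256 - 1  # allowance value commonly used for "unlimited"


@dataclass(frozen=True)
class Approval:
    token: str
    spender: str
    allowance: int
    spender_upgradeable: bool = False  # assumed to be supplied by your data source


def flag_approvals(approvals: list[Approval],
                   trusted_spenders: set[str]) -> list[tuple[Approval, list[str]]]:
    """Return each live approval worth reviewing, with the reasons it was flagged."""
    flagged = []
    for a in approvals:
        if a.allowance == 0:
            continue  # already revoked
        reasons = []
        if a.spender not in trusted_spenders:
            reasons.append("unrecognized spender")
        if a.allowance == UNLIMITED:
            reasons.append("unlimited allowance")
        if a.spender_upgradeable:
            reasons.append("upgradeable spender")
        if reasons:
            flagged.append((a, reasons))
    return flagged
```

Anything this returns is a candidate for revocation, or at least for a closer look before the agent’s next session.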

4) Don’t buy performance screenshots—buy verifiable history

If someone markets an “autonomous AI trader,” I ignore the PnL screenshots and ask for:

- On-chain address history (easy to verify)

- Risk limits (max drawdown rules, max position size, kill switch)

- Custody clarity (who can move funds, who can upgrade contracts)

If they can’t answer cleanly, that’s not “early,” that’s “unsafe.” For a basic primer on what to look for in AI trading bots (especially around claims vs reality), this overview is a decent starting point: https://cryptolinks.com/crypto-trading-bot

5) If the agent takes social input (mentions/DMs), treat it as high risk

This is the one people underestimate.

An agent that reacts to public posts is basically running with an open command channel. Unless it has strong filtering and hard on-chain policy enforcement, it’s not “community-driven.” It’s attack-driven.

Reader questions I want to answer (straight talk)

“Is there a legit AI for crypto trading?”

“Legit” doesn’t mean “it won last month.” It means:

- Transparent strategy (at least at the level of constraints and goals)

- Verifiable execution logs (on-chain, or exportable with enough detail to audit)

- Hard risk limits (position sizing, max loss per day/week, circuit breaker behavior)

- No custody surprises (you know exactly who can move funds and when)

If a bot can’t prove those things, it might still be profitable… but it’s not “legit” in the way most people mean when real money is on the line.

“What is the most promising AI crypto to invest in?”

I don’t treat this like a beauty contest. I look for projects where AI usage creates real demand and real fees—compute, inference, data markets, agent tooling that developers actually integrate.

People often cite baskets like TAO, ICP, NEAR, RENDER, FIL when they talk about AI infrastructure exposure, but the point isn’t the list, it’s the framework: does the token sit under something developers are paying for?

“Which AI crypto will explode?”

No one knows, and anyone who claims they do is selling something.

What I watch instead:

- Developer adoption: are builders choosing it without being bribed?

- Real revenue: are there sustained fees from real usage?

- Distribution: is supply concentrated in ways that can rug liquidity?

- Defensibility: can a competitor copy it in 60 days?

“Who is the AI agent that became a crypto millionaire?”

Stories like this are fun, but I treat them like caution signs.

Autonomy + narrative + market access can snowball gains fast. The same mechanism can snowball losses faster—because the feedback loop doesn’t sleep, and crowds love to bait systems that respond publicly.

If you want the reference that made the rounds, it’s here: https://ca.finance.yahoo.com/news/ai-starts-getting-richer-machine-231000891.html

The boring agents will win

The headline isn’t “AI agents are coming.” It’s that they’re already touching money, already making payments, already executing loops—and the rails are getting smoother by the week.

My biggest takeaway is simple:

The winners won’t be the flashiest agents. They’ll be the ones with strict controls, clear audit trails, and security designed for a world where the agent is attacked 24/7.

If you’re building in this space, start treating your agent like financial infrastructure, not a chatbot with a wallet.

If you’re investing or using these tools, don’t ask, “Can it trade?” Ask, “Can it fail safely?”